In recent months, Instagram quietly rolled out a new feature across the platform that limits the content users see.

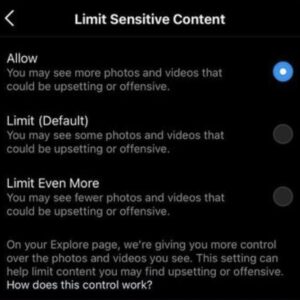

Here’s how you can find this on your account:

> SETTINGS

> ACCOUNT

> LIMIT SENSITIVE CONTENT

As of July 23rd, this feature wasn’t live on every account across the platform, and it seemed to limit the “allow” option to users over 18 years old.

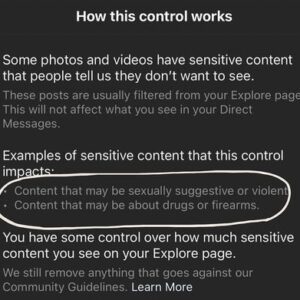

Instagram shares how this feature works:

Content that is “sexually suggestive or violent” and/or “about drugs or firearms” can be educational. This means Instagram could potentially filter out educational accounts.

Adam Mosseri, the head of Instagram, says that “this content is to be filtered out from the explore page.”

However, content creators on Instagram know that this feature has affected more than just what is shown on the explore page.

The reality is that if anyone who doesn’t work for a platform like Instagram tells you that they understand the inner workings of Instagram or how the algorithm works, please know that they’re lying to you.

No one has a firm grasp of the algorithm or how to get content seen by a large number of people. The best we can do is use trial and error and try to understand how things work based on our own experiences using the app.

Although this feature is relatively new, Instagram’s filters aren’t. Instagram has been actively censoring content creators, especially BIPOC content creators.

Many pages see significant changes in the numbers presented on their Insights page when they post content that covers topics in the social justice space, especially when that content is centered on discussing racism.

This censoring is evident in the reach of a post, which is driven by the algorithm Instagram has set up on its end.